I heard people on the internet like to look at pictures of cats but I don’t own a cat, so please look at this jack-o-lantern from the night they had scuba divers doing pumpkin carving underwater at the Long Beach Aquarium.

The truth to how I get business – I answer the phone

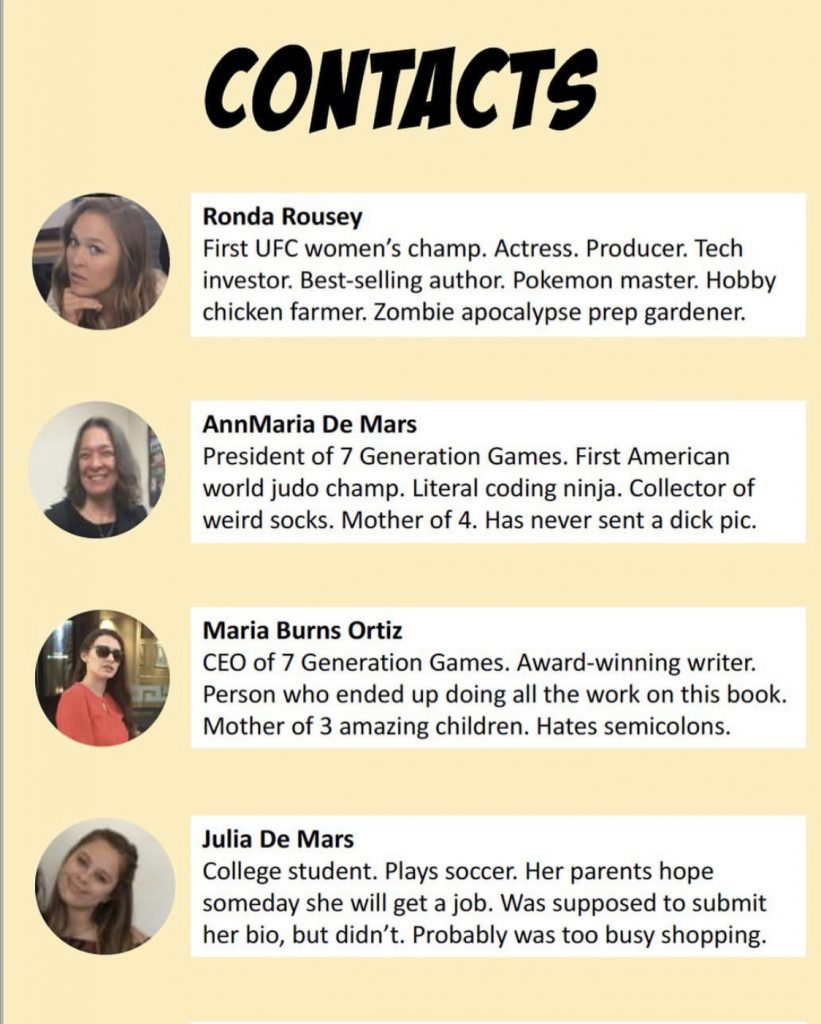

I often get asked how I get opportunities, whether for statistical consulting contracts, customized educational game development or keynote speaking, and the truth is a little embarrassing – I answer the phone or, less frequently, an email.

Someone says, “My friend Esmeralda recommended you. I need a …”

If what they need is six months out, it can probably fit in our schedule, so we talk terms: how much they have in their budget, whether they want me to come on site or whether everything can be done virtually.

Sometimes, I wonder if I should look into SEO, or speak at more events, but the truth is that I don’t want to. If you see me somewhere, I really want to be there, like at the American Association on Teaching and Curriculum conference (a totally awesome bunch of people) or TEDx Fargo (who had any idea that Fargo could be so cool? I’m not talking about the weather, either, it was in July). My TED talk was on “What we talk about at the secret world champions meetings.”

Fifty years ago, I argued that the most important P was a good product

Fifty years ago, I was an undergraduate at Washington University in St. Louis. Yes, I started college at 16, but still that was more than half a century ago. Despite my advanced age, I can remember an argument in my marketing class where we were doing a computer simulation (using a mainframe, of course), changing different values in our marketing strategy. It was for some soft drink brand. The guys in my group (it was almost all men in the business school back then) tried to shout me down when I said product was the most important thing, because it was just soda, for God’s sake. The professor finally came over and agreed with me that even if your advertising could get people to buy your product once, if it tasted like warm piss, they were not going to come back.

Those marketing bros were probably the reason I went into engineering – not really, I just liked statistics and programming.

I still think I’m right about the product part, though.

Our educational games cost from $5,000 to $30,000. For statistical consulting or software development, it’s $100 an hour and the typical contract is around 1,000 hours across multiple years. People aren’t contracting for something that costs over $10,000 because they saw a good post on Pinterest.

Yes, I could charge a lot more than $100 an hour, but that’s a post for another day.

The short version is that we give people excellent value for their money. If they aren’t happy, they can go look and see what quality they can get elsewhere for their money, and when they find out, they come back to us and are happy. Price – that’s the second P in the 4Ps of marketing, if you remember.

So, I guess I’m a bit of a disappointment to people who ask my marketing secret because it just boils down to this – be excellent at what you do, and then answer the phone.

It’s Not Bragging If You Can Back It Up

This is a quote from my old judo coach, and also from my TED talk. I must have missed school the day they lined all the girls up and told them they were supposed to have imposter syndrome and not say they were excellent or that they were worth what they charged and more.

I don’t just answer the phone. I do go to events if I really want to be there, like the AATC meeting, TEDx Fargo and Avance Próximo (which was also totally awesome), but I never go just to present a paper, give a talk or network.

That’s my secret of ‘promotion’ (another P): if I’m giving a talk, it’s not the 15th time I’ve delivered that same message. It’s something I’ve put some thought into for this particular audience. If I’m back to blogging after a couple of years’ hiatus (hey, give me a break, this blog goes back 10 years, I think there are 94 screens listing blog post titles), it’s because I have some thoughts based on what people have asked me. I’m probably not going to add to the approximately 1,865,437,906 posts on AI, although I have written a few things on the topic, like in our LinkedIn newsletter, The Learning Game.

I did actually make a game about making games that you can play on your computer, if you like, so I am not a total slacker.

Place? The fourth P is right here, downstairs in my house. That’s where all my stuff is, the chair my dog sleeps in and where my grandchildren come to visit. Occasionally, place is Belize or Halifax or London because I happen to be visiting family or vacationing with family, and my only real vacation, as my children will tell you, is a laptop with a view.

So, there it is. My marketing plan. I told you in advance it was terrible, so if you are disappointed, you have no one to blame but yourself.
