Logistic regression proves I have no soul

The #reverb10 prompt for December 3rd was to write about a time when you felt most truly alive in 2010. There were more prompts about what you wonder about and other examining-your-soul types of introspection. This isn’t that kind of blog. I don’t think I’m that type of person. For the record, the time I feel most alive is when I am with my family, but I wasn’t the least bit interested in writing about how much I love my family right now. In fact, I was very interested in logistic regression.

Should YOU wonder about logistic regression? Well, that depends.

- Do you have a continuous, numeric dependent variable? If yes, do something else, maybe multiple regression.

- Is one of your variables a dependent variable? If no, do something else, maybe log-linear modeling.

- Do you have more than one independent variable? If not, do something else: usually either a chi-square (if both your variables are categorical) or a t-test (if one of your variables is continuous).
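The checklist above is mechanical enough to write down as a toy function. This is only a sketch: the name `suggest_technique` and its parameters are made up for illustration, and real analysis involves more judgment than a few yes/no questions.

```python
def suggest_technique(has_dependent: bool,
                      dependent_is_continuous: bool,
                      n_independent: int,
                      any_continuous: bool) -> str:
    """Toy encoding of the checklist in this post (hypothetical helper)."""
    if has_dependent and dependent_is_continuous:
        return "multiple regression"
    if not has_dependent:
        return "log-linear modeling"
    if n_independent < 2:
        # One predictor: chi-square if everything is categorical, else a t-test
        return "t-test" if any_continuous else "chi-square"
    return "logistic regression"


# Categorical outcome (married yes/no) predicted from several variables
print(suggest_technique(True, False, 3, True))  # logistic regression
```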

Logistic regression is the statistical technique of choice when you have a single categorical dependent variable (such as married or not) and multiple independent variables from which you would like to predict it.

With logistic regression, the dependent variable you are modeling is built from the PROBABILITY of Y being a certain value divided by ONE MINUS THAT PROBABILITY, in other words, the odds. Let’s start with the simplest model, binary logistic regression. There are two possible outcomes, married or not. We are modeling the probability that an individual is married, yes or no. [Logistic regression is NOT what you would use to model how long a marriage lasted. That would be survival analysis.]

The logistic regression formula models the log of the odds (not of the odds ratio, which comes later). The odds are

$$\frac{P(y = 1)}{P(y = 0)}$$

So, the left side of your equation is

$$\ln\left(\frac{p}{1 - p}\right)$$
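To see what the left side does to a probability, here is a minimal Python sketch of the log-odds transformation. The function name `logit` is my own label for illustration, not an SPSS function. A probability of .5 gives log odds of 0; probabilities above .5 give positive log odds and probabilities below .5 give negative ones.

```python
import math


def logit(p: float) -> float:
    """Log of the odds, ln(p / (1 - p)), for a probability strictly between 0 and 1."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return math.log(p / (1.0 - p))


# Probability -> odds -> log odds for a few illustrative values
for p in (0.10, 0.45, 0.50, 0.90):
    print(f"p = {p:.2f}  odds = {p / (1 - p):.3f}  log odds = {logit(p):+.3f}")
```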

**Very, mega-super-important point here:** the p in this equation is NOT the same old p as in p < .05. No, au contraire. Completely different. This is the probability of the event = 1, for example, the probability of being married. 1 − p, then, would be 1 minus the probability of being married. Yes, that second number is the same as the probability of being single. You aren’t missing anything.

I was, in this post, going to use the probability of being a dumb-ass, but some people have written and told me that I am too hostile for a statistician, so I am trying to mend my ways, it being around the holidays and all.

The right side of the equation is the same old

$$\beta_0 + \beta_1 X_1 + \dots + \beta_n X_n$$

that you are used to with Ordinary Least Squares (OLS) regression, also known as multiple regression or multiple linear regression, or, if you are a complete weirdo, Monkey-Bob.
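Going the other way, from the right side back to a probability, means undoing the log and the odds: p = 1 / (1 + e^−(β0 + β1X1 + … + βnXn)). The sketch below plugs in the two coefficients SPSS reports later in this post (β0 = -.201 for the constant, β1 = 2.398 for cs). Coding cs as 1 for computer science and 0 for French literature is my assumption, and `predicted_probability` is a hypothetical helper name.

```python
import math


def predicted_probability(betas, xs):
    """Inverse logit of the linear predictor b0 + b1*x1 + ... + bn*xn."""
    b0, *slopes = betas
    eta = b0 + sum(b * x for b, x in zip(slopes, xs))  # this is the log odds
    return 1.0 / (1.0 + math.exp(-eta))


betas = [-0.201, 2.398]  # constant and cs coefficient from the SPSS output in this post
print(round(predicted_probability(betas, [0]), 2))  # French literature
print(round(predicted_probability(betas, [1]), 2))  # computer science
```

These come back as about .45 and .90, the observed marriage rates in the example, which is what you would expect from a model with a single yes/no predictor.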

The ODDS RATIO is the odds when x = 1 divided by the odds when x = 0:

$$\text{OR} = \frac{P(y = 1 \mid x = 1) \,/\, P(y = 0 \mid x = 1)}{P(y = 1 \mid x = 0) \,/\, P(y = 0 \mid x = 0)}$$
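In code, the odds ratio is just one set of odds divided by another. A minimal sketch; the probabilities in the usage lines are made-up illustrations, not data from this post.

```python
def odds(p: float) -> float:
    """Odds of the event: P(y = 1) / P(y = 0)."""
    return p / (1.0 - p)


def odds_ratio(p_when_x1: float, p_when_x0: float) -> float:
    """Odds of y = 1 when x = 1, divided by the odds of y = 1 when x = 0."""
    return odds(p_when_x1) / odds(p_when_x0)


# Illustrative numbers only
print(odds_ratio(0.5, 0.2))  # odds of 1.0 versus odds of 0.25
print(odds_ratio(0.3, 0.3))  # same probability in both groups
```

An odds ratio of 1 means x makes no difference; above 1, y = 1 is more likely when x = 1; below 1, it is less likely.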

I presume the only reason you have read this far is that you have some deep-rooted need or desire to understand logistic regression. An example will help. I have discovered lately that I love my husband for a very important reason: he is not a dumb-ass. I have had multiple husbands (not simultaneously; that would be polyandry, illegal in most states and immoral according to certain anal-retentive religions). What they all had in common, other than the obvious fact of being married to me, is that they were all in technical fields and pretty good at what they did. Let’s go with the hypothesis that people who are in a technical field are more likely to be married. Further, let’s say that we have sampled 100 people in computer science and 100 people in French literature. We find that 90 of the computer scientists are married and 45 of the French literature people are.

So, for the computer scientists, the probability of marriage is 90/100 and the probability of not being married is 10/100, which makes their odds 90/10, or 9:1 = 9.

For the French literature people, the probability of marriage is 45/100 and the probability of not being married is 55/100, so their odds are 45/55 = .818.

The odds ratio, then, is 9/.818 = 11.00.

This tells you that the odds of a computer scientist being married versus single are 11 times those of someone in French literature. Also, that you should study computer science instead of French.
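The .818 above is rounded; the French literature odds are exactly 45/55 = 9/11, which is why the odds ratio comes out to a clean 11. Python's `fractions` module keeps the arithmetic exact, using the counts from the made-up sample above.

```python
from fractions import Fraction

# Counts from the hypothetical sample in this post (100 people per field)
married = {"computer science": 90, "French literature": 45}
sample_size = 100

# Odds = married / not married, kept as exact fractions
odds = {
    field: Fraction(n_married, sample_size - n_married)
    for field, n_married in married.items()
}
ratio = odds["computer science"] / odds["French literature"]

print(odds["computer science"], odds["French literature"], ratio)  # 9 9/11 11
```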

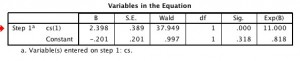

If you really had nothing else to do in your life and wanted to run this using SPSS just to see if I was correct (really, now!), you would get this output.

Gasp! The value of β0, that being our constant, is -.201. The inverse of the natural log is Exp(x), also shown as “e to the x.” This is a function in SPSS, if you want to double-check. It is also a function in SAS, Stata and Excel, but NOT on the calculator on my iPhone. Steve Jobs should feel shame.

The value of Exp(-.201) = .818 – the odds for French literature people.

The value of Exp(2.398) = 11.00 – the odds ratio for computer scientists versus French literature whatever you call them (unemployed would be my guess).
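If your phone calculator lets you down too, Python's `math.exp` and `math.log` will do. This sketch checks the two Exp(B) values above and then goes the other direction, recovering B as the natural log of the odds (45/55) and of the odds ratio (11).

```python
import math

b_constant, b_cs = -0.201, 2.398  # B values from the SPSS output in this post

print(f"Exp({b_constant}) = {math.exp(b_constant):.3f}")  # odds, French literature
print(f"Exp({b_cs}) = {math.exp(b_cs):.2f}")              # odds ratio, CS vs. French

# Reverse direction: B is the natural log of the odds or odds ratio
print(f"ln(45/55) = {math.log(45 / 55):.3f}")
print(f"ln(11)    = {math.log(11):.3f}")
```

This prints .818, 11.00, -.201 and 2.398, matching the table.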

In interpreting a logistic regression analysis, you want to look at the significance of the parameter estimates (SPSS shows .000, which means p < .001, not zero) and at the parameter estimate itself, in this case β = 2.398. A positive coefficient says that the outcome is MORE likely if the variable has the value in question. In SPSS, that value is shown in parentheses. Notice it says cs(1); that means when cs has the value of 1, the outcome is more likely to occur. How much more likely? Look to your right. (On the table, in this blog post, not to your right in your room. What are you thinking?) The odds are 11 times greater for computer scientists than for French literature whats-its.
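Where does the significance come from? For a single yes/no predictor, the standard error of B (the log odds ratio) has a closed form: the square root of the sum of the reciprocals of the four cell counts. The Wald statistic SPSS prints is (B / S.E.)², tested against a chi-square with 1 degree of freedom. A minimal sketch using the counts from this post; it is a check on the logic, not a reproduction of the SPSS table.

```python
import math

# 2 x 2 table from the example: married / not married by field
cs_married, cs_single = 90, 10
fr_married, fr_single = 45, 55

b = math.log((cs_married / cs_single) / (fr_married / fr_single))  # log odds ratio
se = math.sqrt(1 / cs_married + 1 / cs_single + 1 / fr_married + 1 / fr_single)
wald = (b / se) ** 2
# Chi-square(1) upper tail equals the two-sided normal tail for z = b / se
p_value = math.erfc(math.sqrt(wald / 2))

print(f"B = {b:.3f}, S.E. = {se:.3f}, Wald = {wald:.2f}, p = {p_value:.2g}")
```

The p-value is far below .001, which is why SPSS displays it as .000.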

A really good reference if you want a plain-language introduction to logistic regression is by Newsom. There are a lot of really bad references to logistic regression in very obscure language, but I decided not to bother mentioning them.

The syntax for producing this table in SPSS is below.

```
* Binary logistic regression predicting married from cs.
LOGISTIC REGRESSION VARIABLES married
  /METHOD=ENTER cs
  /CONTRAST (cs)=Indicator(1)
  /CRITERIA=PIN(.05) POUT(.10) ITERATE(20) CUT(.5).
```
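No SPSS license handy? The estimates can be reproduced by maximum likelihood directly. This is a from-scratch Newton-Raphson sketch in plain Python, an independent check rather than SPSS's own code. It assumes `cs` and `married` are coded 1/0 (the post doesn't show the data file) and rebuilds the 200 individual records from the counts in the example; `fit_logistic` is a hypothetical helper, and its `max_iter=20` simply mirrors ITERATE(20).

```python
import math


def fit_logistic(xs, ys, max_iter=20, tol=1e-10):
    """Maximum-likelihood fit of ln(p / (1 - p)) = b0 + b1 * x by Newton-Raphson."""
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        g0 = g1 = 0.0          # gradient of the log-likelihood
        h00 = h01 = h11 = 0.0  # information matrix (negative Hessian)
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = p * (1.0 - p)
            g0 += y - p
            g1 += (y - p) * x
            h00 += w
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        # Newton step: solve the 2 x 2 system (information) * step = gradient
        step0 = (h11 * g0 - h01 * g1) / det
        step1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + step0, b1 + step1
        if max(abs(step0), abs(step1)) < tol:
            break
    return b0, b1


# Rebuild the example: cs = 1 for computer science, 0 for French literature
xs = [1] * 100 + [0] * 100
ys = [1] * 90 + [0] * 10 + [1] * 45 + [0] * 55  # married = 1

b0, b1 = fit_logistic(xs, ys)
print(f"Constant B = {b0:.3f}, Exp(B) = {math.exp(b0):.3f}")
print(f"cs B       = {b1:.3f}, Exp(B) = {math.exp(b1):.2f}")
```

With one yes/no predictor the fitted values land on ln(45/55) ≈ -.201 and ln(11) ≈ 2.398, the same numbers as in the SPSS output.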
