5 Basics of Consulting Success: Part 1

Of all the qualities necessary to be a successful statistical consultant, none is more important than communication, even if that communication is only with your future self.

I’ll be speaking about being a statistical consultant at SAS Global Forum in D.C. in March/April. While I will be talking a little bit about factor analysis, repeated measures ANOVA and logistic regression, that is the end of my talk. The first things a statistical consultant should know don’t have much to do with…

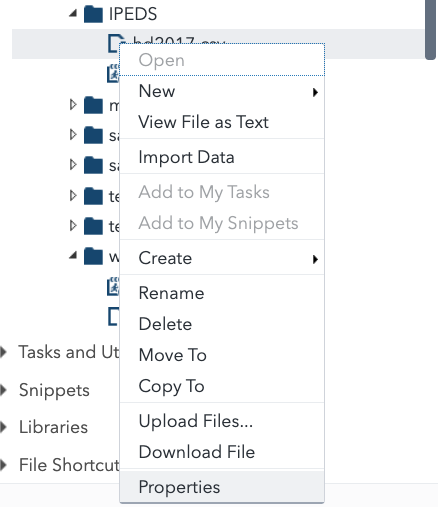

If you’re the kind of statistical consultant who has a range of clients, the ability of SAS to easily read lots of data formats is a godsend for you.

First of all, I want to draw your attention to this retraction in the Journal of the American Medical Association, and mad props to Drs. Aboumatar and Wise and Johns Hopkins for doing the right thing in publicly retracting it. For the TL;DR crowd: someone who is probably now unemployed miscoded the study groups…

The famous statistician F.N. (for Florence Nightingale) David was a professor at UC Riverside, where I earned my doctorate. My advisor told this story about her: We were on this dissertation committee – I forget if it was for biology or what. Back then, this was a small campus, so if you were in statistics…