Semi-programming as a way to simplify life

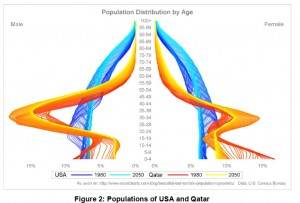

More about the Los Angeles Basin SAS Users Group (LABSUG) later, but I did want to mention one tangential point from the first presentation. It was on the Graphics Template Language (GTL). The first example was pretty cool, looking at the population pyramid by gender and year for the United States, then for Qatar at the same time. This is obviously hundreds of numbers to plot – age by gender for two countries for two years.

You can read how to make this here, in the paper presented at SAS Global Forum this year, Off the Beaten Path.

So, this plot was cool, but there were other plots I looked at and thought, “Gee, I’d just have an artist draw that.”

My take-away from that session was that you COULD graph just about anything, any way you like, with GTL. Whether it is worth the effort or not is another story.

This brings me to “semi-programming” which is a term I just made up. (Not to be confused with semi-infinite programming, which is actually a thing, or quasi-programming, which is what I was going to call it until I found out that was another actual thing.)

Semi-programming is when it is simpler to program half the solution and do the other half some other way.

SEMI-PROGRAMMING EXAMPLE #1

Let’s say your boss wanted you to create a bubble plot of the 14 most popular animal species in the Santa Barbara Zoo, with the bubble size relative to the mean weight of animals of that species. I’m sure you could do something with SAS or some other program with a gazillion programming statements to draw capybaras and parrots and other species on your chart. Or, you could do the bubble plot, find photos of the 14 animal species on Wikipedia, and paste them onto the plot, dragging each photo to be the right size to just fit over its bubble. You could probably do the whole thing with three statements, a few minutes on Google and some copying and pasting, and be done in half an hour.

Spare me the question,

“What if you have to do it again?”

My initial reaction is, then you quit because your boss is an ass if she regularly asks you to do stuff like that. Seriously though, you could create a bunch with the semi-programming method in the time it would take you to do ONE purely by programming.

SEMI-PROGRAMMING EXAMPLE #2

Not convinced? I had another example today. I want to merge my pretest and posttest groups. Because these data are very valuable, I don’t want to lose a single subject. Unfortunately, our subjects are children and, due to very strict concerns about confidentiality, we have no personally identifying information. We merge the data sets by username, by grade, by school. In a few cases, the kids did the test twice. It’s online. They accidentally submitted the test, then realized they had skipped a question or two, so they opened it again and continued the test. (There’s a reason we want them to be able to do that.)

So, we have two identical records for that child.

Sometimes, though, there are two records with the same username because kids at two different schools had similar usernames and one mis-typed theirs. So, your username might be MightyMan and mine is MightyMean and I accidentally left out the “e”. So, it is NOT the same kid twice. One way to see if it is a real duplicate versus an error is to sort the data sets by school, grade and then username and merge them – but what if the student didn’t enter the name of the school, which a few did not? Or entered the wrong school? (Yes, that happened.)

I thought about all kinds of complicated solutions to this until it occurred to me that there were probably no more than 5 or 6 records that were really a problem. So, here is my semi-programming solution.

1. Write a program that does this:

- Sorts records by username, grade and school

- If there is no duplicate (it is the only record with that username, grade and school combination) output that to one data set

- If there is a duplicate, output those records to the other data set

2. Look at the 5 or 6 records that are duplicates. Identify which are the same student twice, in which case, delete the incomplete one. If it is a case of an error, see that MightyMean is really at school number 2, and enter an e in the username.

Since I expect no more than half a dozen of these, it will probably take me less than a minute to fix them manually, after I have my little program to spit out the problem children.
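Here is a minimal sketch of what that little program might look like. I'm using Python purely for illustration, not the SAS I would actually run. The field names (`username`, `grade`, `school`, `answered`) and the sample records are made up, and the matching rule (exact match on all three key fields) is an assumption you would adjust to your own data.

```python
from collections import defaultdict


def split_duplicates(records, key_fields=("username", "grade", "school")):
    """Split records into (unique, duplicates) by the combination of key_fields.

    A record is 'unique' if no other record shares its key; otherwise every
    record with that key goes to 'duplicates' for a human to look at.
    """
    groups = defaultdict(list)
    for rec in records:
        # Build the key as strings so missing or mixed-type values still sort.
        key = tuple(str(rec.get(field, "")) for field in key_fields)
        groups[key].append(rec)

    unique, duplicates = [], []
    for key in sorted(groups):  # sorted by username, then grade, then school
        target = unique if len(groups[key]) == 1 else duplicates
        target.extend(groups[key])
    return unique, duplicates


# Hypothetical sample data: MightyMan submitted the test twice.
records = [
    {"username": "MightyMan", "grade": 5, "school": 1, "answered": 18},
    {"username": "MightyMan", "grade": 5, "school": 1, "answered": 20},
    {"username": "MightyMean", "grade": 5, "school": 2, "answered": 20},
    {"username": "ZooFan", "grade": 4, "school": 1, "answered": 20},
]

unique, duplicates = split_duplicates(records)

# The "problem children" to check by hand.
for rec in duplicates:
    print(rec)
```

The program stops at spitting out the suspect records; deciding which one to delete or which username to correct stays manual. Passing `key_fields=("username", "grade")` instead would also flag records where the school was left blank or entered wrong, at the cost of a few more rows to eyeball.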

Semi-programming. Think about it.

While I agree with your major point, I typically find (especially with your duplicate records example) that if I do it that way, then I normally have to repeat an extremely similar process soon after, while if I do it in a fully automated fashion then I never use that damn script again.

I suppose the moral is, don’t automate until you have to do something for the second time.

Also on the subject of the optimal mix between more reductive (programming) and more holistic approaches to a new problem: where the problem is even moderately complex, it pays to work through the solution manually _before_ starting to automate it. Almost always, edge cases and previously unrecognized facets will be discovered, as well as shortcuts and opportunities for refactoring.

Likewise, I have found that before working with a new dataset, it’s worthwhile to “simply” browse through the data, looking especially at the beginning and end, assessing maxima, minima, gaps and outliers, and other patterns or features of interest, _before_ proceeding with automated analysis.

The moral is don’t automate until you have to do it for the second time – I think that is a perfect moral!

As for Jeff’s approach, I wholeheartedly agree. That was going to be my first point in a paper on macros that I never got around to writing – make sure it runs as regular code first. I’m also with you in taking half an hour or so in eyeballing the data. I DID write a couple of macros for that, to produce mean, minimum, maximum, print the first five rows, etc.
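For anyone who wants the flavor of that eyeballing step without SAS macros, here is a rough sketch in Python. It is not my actual macro code; the function name `eyeball`, the field names and the sample rows are all hypothetical, and it assumes missing values are stored as `None`.

```python
from statistics import mean


def eyeball(rows, numeric_fields, n_head=5):
    """Return (summary, head): basic stats per numeric field and the first rows.

    Missing values (None) are skipped when computing the statistics.
    """
    summary = {}
    for field in numeric_fields:
        values = [row[field] for row in rows if row.get(field) is not None]
        if values:
            summary[field] = {
                "n": len(values),
                "mean": mean(values),
                "min": min(values),
                "max": max(values),
            }
        else:
            summary[field] = {"n": 0}  # nothing to summarize for this field
    return summary, rows[:n_head]


# Made-up test scores; one student has no score recorded.
rows = [
    {"username": "a", "score": 10},
    {"username": "b", "score": 20},
    {"username": "c", "score": 30},
    {"username": "d", "score": None},
    {"username": "e", "score": 40},
    {"username": "f", "score": 50},
    {"username": "g", "score": 60},
]

summary, head = eyeball(rows, ["score"])
print(summary["score"])
for row in head:
    print(row)
```

A count that is lower than the number of rows, or a minimum or maximum outside the possible range, is usually the first hint that something needs a closer look before any automated analysis.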