Random non-parametric thingies: This is your brain on stats

Here is how the Wald statistic works: You divide the maximum likelihood coefficient estimate by its standard error and square the result.

If you wanted to be really specific about it, what you are dividing is the difference between the obtained coefficient estimate and its hypothesized value. I would say, though, that 99% of the time the hypothesis you are testing is that the coefficient is zero, that is, that the independent variable has zero effect on the outcome variable. Since the coefficient estimate minus zero is the coefficient estimate, it is actually simpler, although somewhat less precise, to state it the way that I just did.
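If you would rather see the arithmetic than read about it, here is a minimal Python sketch. The coefficient and standard error are made-up numbers, not output from any real model, and `wald_chi_square` is just a name I picked for illustration. It assumes a single coefficient, so the statistic is compared to a chi-square distribution with one degree of freedom.

```python
import math

def wald_chi_square(estimate, std_error, hypothesized=0.0):
    """Return (Wald chi-square, p-value) for a single coefficient (1 df)."""
    # Difference between the estimate and the hypothesized value,
    # divided by the standard error.
    z = (estimate - hypothesized) / std_error
    # Square the result to get the Wald chi-square.
    wald = z ** 2
    # With 1 df, P(chi-square > wald) equals the two-sided normal tail P(|Z| > |z|).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return wald, p_value

# Hypothetical values: coefficient of 0.50 with a standard error of 0.20.
wald, p = wald_chi_square(0.50, 0.20)
print(f"Wald chi-square = {wald:.2f}, p = {p:.4f}")
```

Leaving `hypothesized` at its default of zero gives the simple "divide and square" version; passing some other value gives the really-specific version.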

In my experience, people who use discriminant function analysis and logistic regression usually differ in their intent. Discriminant function analysis attempts to sort people into two (or more) groups. Logistic regression predicts the probability of an individual being in a specific group.
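To show what "predicts the probability" means in practice, here is a rough Python sketch of the logistic regression half of that comparison. The intercept, the coefficients and the patient's values are all invented for illustration, and `predicted_probability` is a hypothetical helper, not a function from any statistics package.

```python
import math

def predicted_probability(intercept, coefficients, values):
    """Turn a logistic regression's linear predictor into a probability."""
    # Linear predictor: intercept plus the sum of coefficient * predictor value.
    linear_predictor = intercept + sum(b * x for b, x in zip(coefficients, values))
    # The logistic function maps any real number to a value between 0 and 1.
    return 1.0 / (1.0 + math.exp(-linear_predictor))

# Hypothetical coefficients for (education, stress) and one hypothetical patient.
prob = predicted_probability(-2.0, [-0.1, 0.3], [12, 10])
print(f"Predicted probability of being in the group: {prob:.2f}")
```

What comes out is a probability for one individual, not a verdict about which group that person belongs in.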

People who use discriminant function analysis are often interested in predicting, for example, who will drop dead of a heart attack and who won’t. If they find that 80% of those who drop dead can be predicted correctly, and 77% of those who don’t can also be predicted correctly using a combination of education, the Selye Stress Scale and how many times a year the patient eats liver with onions, then they are happy. (Topic for future research – why would anyone eat liver? It tastes totally gross.)

People who use logistic regression are often almost as interested in the relative effects of the predictors as they are in the overall model. So, they are happy to know that the pseudo-R-squared is .35, but they are at least as interested in knowing that the coefficient for stress is positive and substantially larger than the one for education, while the coefficient for liver (no matter how gross it may taste) is non-significant.

From a statistical standpoint, the major difference between discriminant function analysis and logistic regression is that discriminant function analysis makes a lot of assumptions about the distribution of the independent (i.e., predictor) variables, specifically that these are normally distributed and linearly related to the dependent variable. Logistic regression does not make these assumptions about how the predictors are distributed.

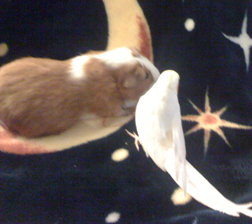

So, for the person on SAS community who said that for the next Los Angeles Basin SAS Users Group they would like a discussion of non-parametrics so easy a hamster could understand it (BYOH – bring your own hamster) – this was the best I could do on a Friday afternoon.

And yes, I do know that is not a hamster, but all I had hanging around was a guinea pig named Edward G. Robinson and a spare cockatiel.