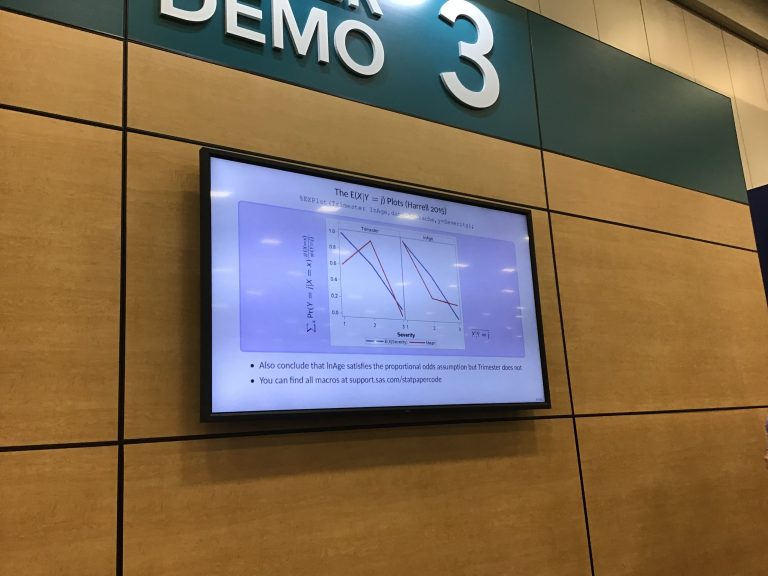

Fixing the transcoding error in SAS

Here is why I am still not a 10x developer despite using AI … but first, the answer to your problem, which is probably how you found this blog in the first place. This answer came courtesy NOT of any generative AI program but of reading the SAS documentation. Or, as we used to say…